Using computer vision to measure water clarity

In December last year I began an internship at AusOcean. Growing up surrounded by beautiful oceans on both the Eyre and Yorke Peninsulas has given me a deep appreciation for these environments, and the opportunity to work somewhere I could actively help support them was an amazing experience. I worked primarily on ways to measure water clarity using computer vision. I also developed a way of reporting live sensor data from our rigs to the YouTube live streams. This report outlines my journey over the last three months.

The internship began in early December, when I was joined by two other interns, Alex and McKenzie. My first week involved learning the basics of the Go programming language and deciding on our projects. I had recently completed my Bachelor of Computer Science, majoring in AI, and was keen to work on a project where I could best develop my skills in computer vision and machine learning. AusOcean wanted to develop a way of measuring water clarity and turbidity on their rigs. This would provide a measure of visibility, which would be useful for divers, as well as a way to track changes in turbidity over time. Using computer vision would provide a scalable solution that could utilize AusOcean’s existing underwater camera technology.

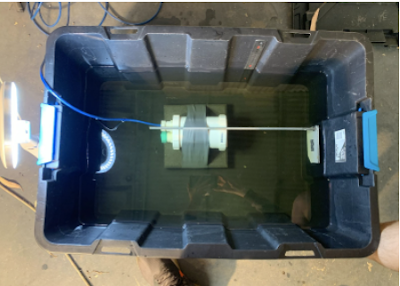

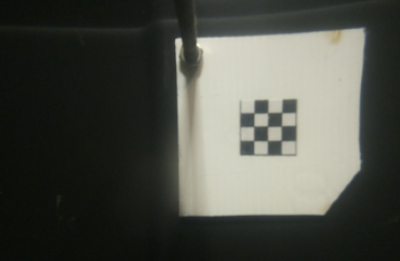

After familiarizing myself with the codebase and solving some basic issues, I got to work on my project. The freedom was initially overwhelming. I began by trying to better understand the problem. This involved reading papers and the work completed by previous interns until I felt confident enough to make a start. I found a paper that outlined an approach I felt could be applicable to AusOcean. The full paper can be found here. Put briefly, the approach evaluates the sharpness and contrast of a target image placed in the camera's field of view. The sharpness and contrast scores can then be correlated with turbidity or with the approximate visibility in metres. The target image could theoretically be anything, but images that already have high sharpness and contrast work better. I chose a black and white chessboard-like target because GoCV provides an out-of-the-box implementation for detecting chessboards.
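
To make the idea of scoring a target concrete, here is a minimal sketch in plain Go using only the standard library. It is not the code from the project or the exact metrics from the paper, neither of which is given here. As assumptions for illustration, sharpness is taken as the mean squared difference between neighbouring pixels, contrast as Michelson contrast, and the helper names (`makeChessboard`, `simulateMurk`, `sharpness`, `contrast`) are all hypothetical. In a real frame, GoCV's chessboard detection would first locate the target; here a synthetic board stands in for it.

```go
package main

import (
	"image"
	"image/color"
)

// makeChessboard draws a synthetic black and white board with the given
// number of squares per side, each cell pixels wide (hypothetical helper).
func makeChessboard(squares, cell int) *image.Gray {
	size := squares * cell
	img := image.NewGray(image.Rect(0, 0, size, size))
	for y := 0; y < size; y++ {
		for x := 0; x < size; x++ {
			if (x/cell+y/cell)%2 == 0 {
				img.SetGray(x, y, color.Gray{Y: 255})
			}
		}
	}
	return img
}

// simulateMurk is a crude stand-in for cloudy water: a 3x3 mean blur
// followed by blending every pixel halfway towards mid-grey.
func simulateMurk(src *image.Gray) *image.Gray {
	b := src.Bounds()
	dst := image.NewGray(b)
	for y := b.Min.Y; y < b.Max.Y; y++ {
		for x := b.Min.X; x < b.Max.X; x++ {
			sum, n := 0, 0
			for dy := -1; dy <= 1; dy++ {
				for dx := -1; dx <= 1; dx++ {
					p := image.Pt(x+dx, y+dy)
					if p.In(b) {
						sum += int(src.GrayAt(p.X, p.Y).Y)
						n++
					}
				}
			}
			dst.SetGray(x, y, color.Gray{Y: uint8((sum/n + 128) / 2)})
		}
	}
	return dst
}

// sharpness returns the mean squared difference between each pixel and its
// right and lower neighbours (an assumed metric, higher means sharper).
func sharpness(img *image.Gray) float64 {
	b := img.Bounds()
	total, count := 0.0, 0
	for y := b.Min.Y; y < b.Max.Y-1; y++ {
		for x := b.Min.X; x < b.Max.X-1; x++ {
			p := int(img.GrayAt(x, y).Y)
			dx := int(img.GrayAt(x+1, y).Y) - p
			dy := int(img.GrayAt(x, y+1).Y) - p
			total += float64(dx*dx + dy*dy)
			count++
		}
	}
	if count == 0 {
		return 0
	}
	return total / float64(count)
}

// contrast returns the Michelson contrast (max-min)/(max+min) in [0, 1].
func contrast(img *image.Gray) float64 {
	b := img.Bounds()
	lo, hi := 255, 0
	for y := b.Min.Y; y < b.Max.Y; y++ {
		for x := b.Min.X; x < b.Max.X; x++ {
			v := int(img.GrayAt(x, y).Y)
			if v < lo {
				lo = v
			}
			if v > hi {
				hi = v
			}
		}
	}
	if hi < lo || hi+lo == 0 {
		return 0
	}
	return float64(hi-lo) / float64(hi+lo)
}
```

Scoring `makeChessboard(8, 8)` and then `simulateMurk` of the same board gives lower values for both `sharpness` and `contrast` on the murky copy, which is the kind of drop that would then be correlated with turbidity or visibility.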

These results showed some promise. Increasing the amount of almond milk did decrease the contrast and saturation. The next challenge was to integrate my computer vision code into the streaming client software. Before tackling that, I decided to make a start on my secondary project. This involved working on a way to send sensor data, namely water temperature, to our live stream at Rapid Bay. Although it was a smaller project, it was a rewarding challenge. After I mistakenly used a 1-second message interval instead of 1 minute, which resulted in hundreds of messages being sent to the Rapid Bay live stream, the project was completed and received some positive feedback from divers.
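
The interval mix-up is easy to quantify. The sketch below is not the project's code: the constants, the message format and the `reportLoop` signature are all hypothetical, and the `read` and `post` functions stand in for the real sensor and live-stream calls. It shows how expressing the interval as a named `time.Duration` makes the intended rate explicit, and how many messages each choice produces over ten minutes.

```go
package main

import (
	"fmt"
	"time"
)

// Hypothetical values for illustration only.
const (
	intendedInterval  = time.Minute      // one message per minute
	mistakenInterval  = time.Second      // the accidental setting
	observationWindow = 10 * time.Minute // period used for the comparison
)

// messagesIn returns how many messages a sender posting once per interval
// emits over the given period.
func messagesIn(period, interval time.Duration) int {
	if interval <= 0 {
		return 0
	}
	return int(period / interval)
}

// formatTemperature builds the message text (the format is an assumption).
func formatTemperature(celsius float64) string {
	return fmt.Sprintf("Water temperature: %.1f°C", celsius)
}

// reportLoop posts one reading per interval until stop is closed.
// read and post are injected so the loop can be exercised without
// real hardware or a real live stream.
func reportLoop(interval time.Duration, read func() float64, post func(string), stop <-chan struct{}) {
	ticker := time.NewTicker(interval)
	defer ticker.Stop()
	for {
		select {
		case <-ticker.C:
			post(formatTemperature(read()))
		case <-stop:
			return
		}
	}
}
```

With these values, `messagesIn(observationWindow, mistakenInterval)` is 600 while `messagesIn(observationWindow, intendedInterval)` is 10, so a few minutes at the wrong setting is enough to flood a chat with hundreds of messages.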

Returning to the water clarity project, the challenge I faced was getting the computer vision code to run while also constantly sending video data for streaming. The score calculation involved a lot of computation, which delayed the video data being sent. Initially, I tried running the computer vision code in parallel with the streaming software. This did not help, most likely because the Raspberry Pi has only a single core and so cannot truly execute two processes at once. Instead, the solution was to improve the efficiency of both the computer vision code and the video decoding process. After resolving these issues, I was able to run the score calculation in around 1 second while maintaining constant streaming. This was the minimum needed before the software could be deployed onto the AusOcean underwater cameras for further testing. While I would love to continue this project, it was here that I reached the end of the internship period.
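
The specific optimisations are not described here, so the following is only a generic illustration of one way to trade accuracy for speed on a single core: estimating contrast from a subsample of pixels rather than the whole frame. The function name `sampledContrast` and the flat greyscale buffer layout are assumptions.

```go
package main

// sampledContrast estimates Michelson contrast, (max-min)/(max+min), over a
// flat greyscale pixel buffer while reading only every step-th pixel.
// A larger step does less work but can miss detail: if the step lines up
// with a regular pattern, the sample may see only one colour.
func sampledContrast(pix []uint8, step int) float64 {
	if len(pix) == 0 {
		return 0
	}
	if step < 1 {
		step = 1
	}
	lo, hi := 255, 0
	for i := 0; i < len(pix); i += step {
		v := int(pix[i])
		if v < lo {
			lo = v
		}
		if v > hi {
			hi = v
		}
	}
	if hi+lo == 0 {
		return 0
	}
	return float64(hi-lo) / float64(hi+lo)
}
```

For an alternating buffer such as `0, 255, 0, 255, ...`, a step of 3 still sees both values and reports full contrast, while a step of 2 lands only on the zeros and reports none. Any sampling scheme like this would need checking against the target pattern before being trusted.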

Overall, this internship was an amazing experience where I got to meet some fantastic people who were always happy to help me learn. This internship challenged me in a way that helped me to become a better engineer overall. I learned about problem-solving, innovation, software design and implementation. I am proud to have contributed to the improvement of ocean monitoring and played my small part in helping our beautiful oceans.

Russell Stanley

BCompSc (Honours)

AusOcean is a not-for-profit ocean research organisation that supports open source practices. Taking an open source approach to environmental issues means embracing collaborative tools and workflows that make processes and progress fully transparent. A critical aspect of working open is sharing data not only with your immediate team but also with others across the world who can learn, adapt and contribute to collective research. By contributing to and supporting open practices within the scientific community, we can accelerate research and encourage transparency. All tech assembly guides can be found at https://www.ausocean.org/technology

Follow us on Instagram, Facebook or Twitter for more photos, videos and stories.
